Activation Atlases – A new way of peering into the ‘black box’ of neural networks

Deep learning and artificial intelligence are among the most hyped buzzwords these days, and they have attracted a great deal of capital and research interest across all the major technology hubs in the world. Not just companies but even countries have come up with AI strategies and vision documents!

AI conquering games such as chess, Go and even StarCraft II has proved the capabilities of deep learning. But one of the areas where deep learning has made the most tremendous improvements is computer vision. Machines trained with deep learning can now match or even exceed human performance on some image recognition and classification tasks. Convolutional neural networks (CNNs), a class of deep learning models, are used for these tasks. Neural networks have become the de facto standard for computer vision and are used in a variety of real-world scenarios, ranging from automated tagging of photos to high-end autonomous driving applications.

While these neural networks can perform at or above human level, they learn through an automated training process, which means that how they actually arrive at their decisions remains a mystery. This really spooks engineers who need to assure the safety of their algorithms in safety-critical situations. It has also limited the use of deep learning in such critical areas, because it is almost impossible to assure the safety of a model over time when we cannot be sure whether it has learned associations that it should not have.

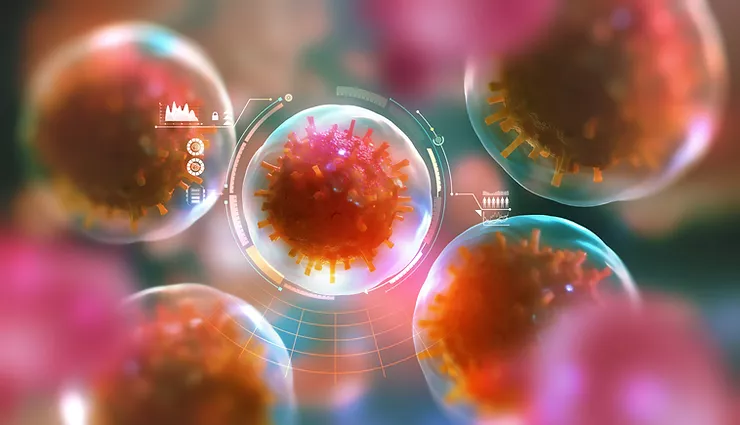

To allow a peek into the black box of neural networks, researchers at Google AI and OpenAI collaborated to develop ‘Activation Atlases’, a new technique for visualizing interactions between neurons in neural networks. They have released a paper as well as demo software for researchers to try out the new technique. As OpenAI puts it, “They give us a global, hierarchical, and human-interpretable overview of concepts within the hidden layers. Not only does this allow us to better see the inner workings of these complicated systems, but it’s possible that it could enable new interfaces for working with images.”
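For readers curious about the mechanics: as described in the accompanying paper, an atlas is built by collecting hidden-layer activations over a large set of images, projecting those activation vectors down to 2-D (the authors used UMAP), laying a grid over the projection, averaging the activations that fall into each cell, and then rendering each averaged vector with feature visualization. The sketch below covers only the project-grid-average step and rests on loud assumptions: activations are a plain NumPy array, PCA stands in for UMAP to avoid extra dependencies, and `project_2d`, `atlas_cells`, `grid_size` and `min_count` are hypothetical names chosen for illustration. The final feature-visualization step needs a trained network and is left out.

```python
import numpy as np

def project_2d(activations: np.ndarray) -> np.ndarray:
    """Project activation vectors to 2-D.

    Assumption: PCA via SVD is used here as a simple stand-in for the
    UMAP projection used in the Activation Atlas paper.
    """
    centered = activations - activations.mean(axis=0)
    # Rows of vt are the principal directions; keep the first two.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:2].T

def atlas_cells(activations: np.ndarray, grid_size: int = 10, min_count: int = 1):
    """Bin projected points into a grid and average activations per cell.

    Returns a dict mapping (row, col) -> (mean activation vector, count).
    `min_count` is a hypothetical threshold for dropping sparse cells.
    """
    coords = project_2d(activations)
    # Normalize each axis to [0, 1], guarding against a zero-width axis.
    mins = coords.min(axis=0)
    spans = coords.max(axis=0) - mins
    spans[spans == 0] = 1.0
    norm = (coords - mins) / spans
    # Map to integer cell indices; points on the upper edge go in the last cell.
    idx = np.minimum((norm * grid_size).astype(int), grid_size - 1)

    cells = {}
    for r, c in {(int(r), int(c)) for r, c in idx}:
        mask = (idx[:, 0] == r) & (idx[:, 1] == c)
        count = int(mask.sum())
        if count >= min_count:
            # The averaged vector is what feature visualization would render.
            cells[(r, c)] = (activations[mask].mean(axis=0), count)
    return cells

# Usage with synthetic stand-in activations (1,000 samples, 64 channels).
rng = np.random.default_rng(0)
acts = rng.normal(size=(1000, 64))
cells = atlas_cells(acts, grid_size=8)
print(len(cells), "non-empty cells")
```

Averaging within a cell is what makes the atlas readable: instead of showing one icon per image, each grid cell summarizes the typical activation of many nearby points, so the map shows broad regions of concepts rather than noise.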

The vast majority of AI research has focused on quantitative evaluation of network behavior, such as model accuracy. As AI systems are deployed in increasingly sensitive contexts, a better understanding of their internal decision-making processes will let us identify weaknesses and investigate failures. Researchers have observed that although these models are highly accurate at image recognition, slight changes in a scene can, in some cases, cause large distortions in the output. Why this occurs, and which spurious correlations the network has learned, is still unknown. Visualizing these correlations opens up a new way of understanding the inner workings of neural networks, allowing them to be audited for safety-critical use cases.

This opens a new research direction for researchers who want to compare different types of neural networks and training methods. It could also help make neural networks more robust, since ways to fool a network can be discovered by inspecting its inner workings! The researchers also show that many nuanced images can be generated from what the network has learned. It also shows that large AI groups are willing to come together for research on AI safety, which is a very good sign for the advancement of the field.

To dive deeper, stay up to date with the latest global innovations in artificial intelligence, and learn more about applications in your industry, test drive WhatNext now!
